Microsoft 365 Copilot – Is your data ready for takeoff?

With Copilot, Microsoft equips its users with a powerful GenAI tool that can make their daily work much easier. To achieve this, however, all preparations for the "flight" must be in place: from data quality and access rights to technical implementation. You can find out here which aspects of these topics you should pay particular attention to before you get started with Microsoft Copilot.

The AI turbines have started and the powerful Azure OpenAI engine is thundering away at its core. In the rear of the cabin there is still a bit of hustle and bustle until all the passengers have found their seats and the seat belt signs light up. And finally, in the cockpit, you as the captain ask the co-pilot: “Can you please create a PowerPoint presentation for me based on our last project meeting in Teams with the most important status updates?”

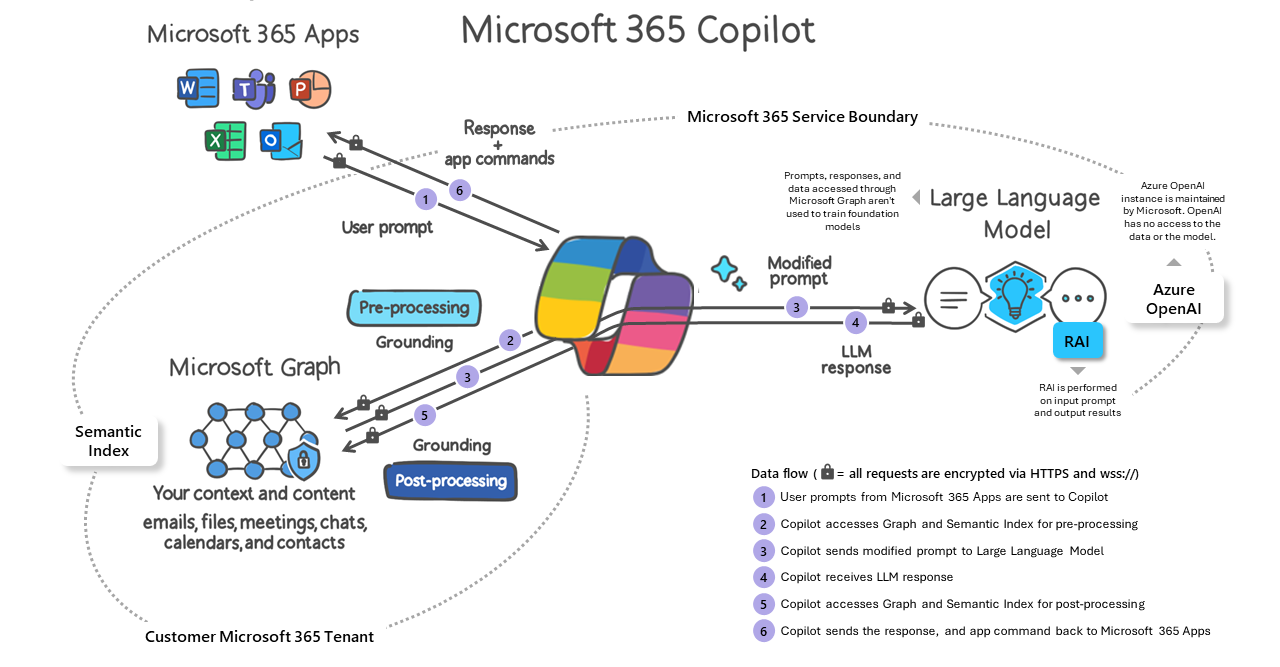

This or something similar is what the start of the working day could look like in the future. In November 2023, Microsoft launched Microsoft 365 Copilot, a new service that it claims will “boost users’ creativity, productivity and personal skills”. Using generative artificial intelligence, Copilot helps you create and improve documents, presentations and emails in real time, based on your company’s data. In doing so, Copilot takes into account the specific context, such as the topic, style, target group and quality of the content. The big advantage over other GenAI tools is the direct integration of OpenAI’s large language models (LLMs) into the Microsoft 365 ecosystem. Apps such as Word, Excel and PowerPoint as well as Outlook and Teams can pick up your queries and the AI-generated information directly and convert them into “digital artifacts”. Copilot is now also available for other services, such as process automation or content creation for corporate communications. And all of this takes place within the established security and compliance functions of your company’s own Microsoft 365 tenant.

How Microsoft Copilot works

Will Microsoft Copilot make us superfluous?

No, because just as in regular air traffic, the final decisions are still up to the pilot, i.e., us users. In the digital cockpit, this means assessing whether Copilot has collected the relevant information and linked it correctly. Is the result coherent? Has everything been considered? Only once everything has been checked is it cleared for takeoff.

Ready for takeoff? Carry out the check!

But what exactly should you check? Compared to other Microsoft 365 services, deploying Copilot is relatively easy. Your IT department only needs to make a few technical preparations. The users are then assigned the corresponding licenses and the “aircraft” is theoretically ready to take off. But just as with a real flight, the final organizational and safety instructions still have to be completed before departure.

The usefulness and benefits of Copilot depend heavily on the quality and organization of your data. Copilot grounds the LLM in your company’s data to provide you with relevant and personalized suggestions. If your data is unstructured, outdated, inaccurate or even inaccessible, Copilot will not be able to reach its full potential, just as an airplane with outdated technology and insufficient fuel should not be expected to take off. It is therefore important to ensure a secure and clean data landscape before you taxi onto the runway with Copilot. Microsoft also identifies this topic as a primary focus area for preparation and refers to it as the company’s “information readiness”.

You should therefore check the following before starting with Microsoft Copilot:

- What is your information architecture like? Is there a concept for this or has it grown historically and is due for revision?

- Who has access to which information? Who can grant or remove access and are these processes audited?

- How is information structured and classified? Is classification linked to data protection measures?

- How is the life cycle of information regulated from a process perspective? Who ensures the data is up to date and of high quality?

GenAI despite regulation?

Do you have sensitive company data that is not (yet) allowed to be stored in the cloud or in Microsoft 365? Comma Soft’s LLM solution offers you the opportunity to use generative AI in a securely hosted environment in which you retain full control over your data.

Please observe the safety instructions!

There are several fields of action for preparing your information for optimal and secure processing by Copilot. Those in the area of governance are particularly relevant, and preparatory activities should focus on them. To help you get off to a good start, let’s take a closer look at the most important points:

Access rights

One of the most important areas is the structured and controlled allocation of access rights. Whether it’s self-managed memberships in Microsoft Teams or the uncontrolled sharing of documents and sharing links within the company (“oversharing”): all of this can quickly give individuals access to data that should not be accessible to them. The AI will then also use this person-specific access when processing requests. Predefined and automated processes and regular audits can help here. In addition, precisely tailored access rights also increase the quality of the generated results. Information that is not relevant to the user, and therefore does not fit the context, is not included in the analysis. This reduces the risk that results are “diluted” or misleading.
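As a rough illustration of what such a regular audit could look for, here is a minimal Python sketch. It assumes you have already exported an inventory of documents together with the groups that should and actually do have access; the `SharedItem` record, its field names and the sample data are hypothetical and do not correspond to any Microsoft 365 API.

```python
from dataclasses import dataclass


@dataclass
class SharedItem:
    """Hypothetical record of one document and who can reach it."""
    path: str
    intended_groups: set[str]         # groups that should have access
    granted_groups: set[str]          # groups that actually have access
    has_org_wide_link: bool = False   # company-wide sharing link exists


def find_oversharing(items: list[SharedItem]) -> list[tuple[str, list[str]]]:
    """Return (path, reasons) for every item shared more widely than intended."""
    findings = []
    for item in items:
        reasons = []
        extra = item.granted_groups - item.intended_groups
        if extra:
            reasons.append("unintended groups: " + ", ".join(sorted(extra)))
        if item.has_org_wide_link:
            reasons.append("organization-wide sharing link")
        if reasons:
            findings.append((item.path, reasons))
    return findings


# Made-up sample inventory for illustration only.
inventory = [
    SharedItem("HR/salaries.xlsx", {"HR"}, {"HR", "Sales"}),
    SharedItem("Marketing/brochure.pptx", {"Marketing"}, {"Marketing"}),
    SharedItem("Finance/forecast.docx", {"Finance"}, {"Finance"},
               has_org_wide_link=True),
]

for path, reasons in find_oversharing(inventory):
    print(path, "->", "; ".join(reasons))
```

In this sample, the salary file and the forecast would be flagged, while the brochure passes. A real audit would draw the inventory from your tenant's own reporting tools rather than from a hand-written list.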

Data classification

A company-wide data classification also helps to structure data and access. The classification can be assigned manually by the user or automatically using preset or user-trainable identification features. In conjunction with technical measures to protect against data loss (data loss prevention), you can specify, for example, that information with a certain classification must not be shared or may only be shared with certain groups of people. This means that such information is only used for AI-generated results requested by authorized users.
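The interplay between classification and sharing rules can be sketched in a few lines of Python. This is only a conceptual model under stated assumptions: the three labels, the group names and the `may_share` function are hypothetical and are not how any particular data loss prevention product is configured.

```python
from enum import IntEnum


class Label(IntEnum):
    """Hypothetical classification levels, from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2


# Hypothetical policy: groups that may receive content at each level.
# None means "no restriction".
ALLOWED_GROUPS = {
    Label.PUBLIC: None,
    Label.INTERNAL: {"Employees"},
    Label.CONFIDENTIAL: {"Management", "Legal"},
}


def may_share(label: Label, recipient_groups: set[str]) -> bool:
    """Allow sharing only if the recipient belongs to a permitted group."""
    allowed = ALLOWED_GROUPS[label]
    if allowed is None:
        return True
    return bool(allowed & recipient_groups)


# A sales employee may not receive confidential content, a lawyer may.
print(may_share(Label.CONFIDENTIAL, {"Employees", "Sales"}))
print(may_share(Label.CONFIDENTIAL, {"Employees", "Legal"}))
```

Keeping the policy in one table makes it easy to review and audit, which is the same idea behind managing classification rules centrally rather than per document.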

Data life cycle

It is especially important that information provided company-wide (e.g., company presentations, product descriptions and marketing information) is checked both initially and continuously for quality and timeliness. When is information “ready” for the company? And when is it “outdated”? Automated processes that describe the life cycle of your data in its various phases help to ensure that your users, and therefore also Copilot, have access to accurate and useful information. From creation and approval for publication through to archiving or deletion, predefined rules and responsibilities ensure a clean data flow.
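To make the questions of “ready” and “outdated” more concrete, the following Python sketch derives a life cycle status from an approval flag and the date of the last review. The thresholds (review after one year, archive after three) and the status names are assumptions chosen purely for illustration; your own rules will differ.

```python
from datetime import date, timedelta
from typing import Optional

# Assumed thresholds for illustration only.
REVIEW_INTERVAL = timedelta(days=365)      # review at least once a year
ARCHIVE_AFTER = timedelta(days=3 * 365)    # archive after three years unreviewed


def lifecycle_status(approved: bool,
                     last_reviewed: Optional[date],
                     today: date) -> str:
    """Classify a document as draft, current, review due or archive."""
    if not approved or last_reviewed is None:
        return "draft"
    age = today - last_reviewed
    if age > ARCHIVE_AFTER:
        return "archive"
    if age > REVIEW_INTERVAL:
        return "review due"
    return "current"


today = date(2024, 6, 1)
print(lifecycle_status(True, date(2024, 1, 15), today))   # reviewed recently
print(lifecycle_status(True, date(2022, 11, 1), today))   # over a year ago
print(lifecycle_status(True, date(2020, 5, 1), today))    # over three years ago
print(lifecycle_status(False, None, today))               # never approved
```

A rule like this only works if someone is responsible for acting on the “review due” and “archive” results, which is why the responsibilities matter as much as the automation.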

All of these topics should generally be taken into account when managing data. However, the availability of LLMs gives AI-supported and automated information collection a whole new meaning. For this reason, appropriate adaptation or redesign measures relating to data management should be proactively reviewed and planned at an early stage.

If you are interested in Microsoft 365 Copilot or are looking for guidance on optimizing your information architecture or on governance issues, please contact Dr. Jens Neuser and his colleagues directly.